TL;DR: create a .dockerignore file to filter out directories that won’t form part of the built image.

Long version: I’m working on the upcoming Kubernetes course, with a massive deadline looming over me [available at VirtualPairProgrammers by the end of May, Udemy soon afterwards], and the last thing I need is a simple docker image build freezing up, apparently indefinitely!

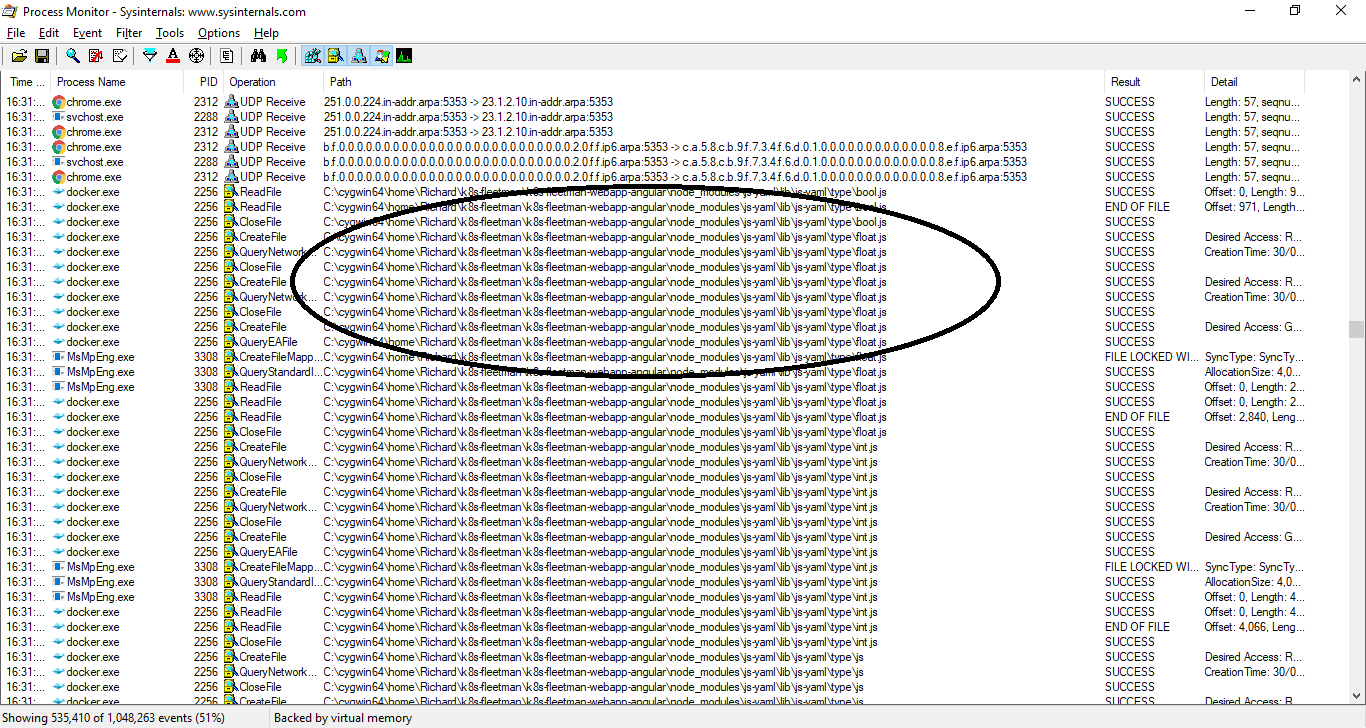

A quick inspection of running processes with Procmon (I’m developing on Windows) showed a massive number of files being read and closed:

This is just a small extract from the process log.

This [every file in the repository being visited] is normal behaviour of the docker image build process – I guess I’ve just been working on repositories with a relatively small number of files (Java projects). This being an Angular project, one of the folders is “/node_modules”, which contains a massive number of package modules – most of which aren’t actually used by the project but are there as local copies. This directory can easily be regenerated and isn’t considered part of the project’s actual source code. [Edit to add: it’s the equivalent of a Maven repository in Javaland. The .m2 directory is stored outside your project, so this isn’t a concern there.]

Turns out, the /node_modules folder contained 33,335 files, whilst the rest of the project contained just 64 files!
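If you want to reproduce that comparison on your own project, here is a minimal sketch for a POSIX shell (Git Bash works on Windows). It assumes the dependency folder sits at the project root and is called node_modules. The helper names count_dep_files and count_project_files are hypothetical, and the second count includes hidden folders such as .git.

```shell
# count_dep_files DIR (hypothetical helper): number of files under DIR/node_modules,
# or 0 if that folder does not exist.
count_dep_files() {
  local root="${1:-.}"
  if [ -d "$root/node_modules" ]; then
    find "$root/node_modules" -type f | wc -l | tr -d ' '
  else
    echo 0
  fi
}

# count_project_files DIR (hypothetical helper): number of files in DIR,
# pruning the top-level node_modules folder from the search.
count_project_files() {
  local root="${1:-.}"
  find "$root" -path "$root/node_modules" -prune -o -type f -print | wc -l | tr -d ' '
}
```

Run `count_dep_files .` and `count_project_files .` from the project root to get the two numbers.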

Routinely, we .gitignore the /node_modules folder, and of course it makes sense to ignore this directory for the purposes of Docker too.

Simply create a file called .dockerignore in the root of the project:

$ cat .dockerignore

/node_modules

You might also consider adding .git to this ignore list.
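As a sketch, a helper that writes such a file with both entries might look like the following. The name write_dockerignore is hypothetical, and it overwrites any existing .dockerignore in the target directory. Lines starting with # are treated as comments by Docker.

```shell
# write_dockerignore DIR (hypothetical helper): writes DIR/.dockerignore,
# overwriting any existing file, so node_modules and .git stay out of the build context.
write_dockerignore() {
  local root="${1:-.}"
  # Quoted 'EOF' so the shell leaves the body exactly as written.
  cat > "$root/.dockerignore" << 'EOF'
# Dependency folder: can be regenerated, not part of the source code
/node_modules
# Version-control history is not needed to build the image
.git
EOF
}
```

Calling `write_dockerignore .` from the project root creates the file next to the Dockerfile, where `docker build .` picks it up.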

Now my docker image build is taking a few seconds instead of several hours. Perhaps I might meet this deadline after all…

Stumbled on this while waiting…

Massively helpful!

I have the same directory layout for every image I create:

$image_name

$image_name/files/

$image_name/Dockerfile

As you might guess, $image_name/files holds all the files that get ADDed or COPYed into the image during the build. That directory never grows very big, either in number of files or in size (always fewer than 100 files and 15 to 25 MB).
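To check that a directory like files/ really stays that small, a quick sketch could report its file count and total size. The helper name context_stats is hypothetical. It counts regular files only and reports disk usage in KB as given by du.

```shell
# context_stats DIR (hypothetical helper): prints the number of regular files
# under DIR and its total disk usage in KB.
context_stats() {
  local root="${1:-.}"
  local count size
  count=$(find "$root" -type f | wc -l | tr -d ' ')
  size=$(du -sk "$root" | cut -f1)
  echo "$count files, ${size} KB"
}
```

For example, `context_stats "$image_name/files"` prints a single summary line for that directory.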

Yesterday everything worked fine, today not a single image builds.

So I did this:

cat << EOF > $image_name/.dockerignore

**

!files/

EOF

And now everything works?! It doesn’t make sense.

Wow, that sure doesn’t make sense. So you’ve set it to ignore everything except /files? That should only be excluding the home dir and the Dockerfile. Are you sure there isn’t a hidden directory or something that’s grown big?

Feels like something else weird has changed overnight but I can’t think what that could be!
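One way to check for a hidden directory that has grown is sketched below: it lists the hidden entries directly under a directory with their sizes in KB, largest first. The helper name list_hidden is hypothetical, and only the top level is checked.

```shell
# list_hidden DIR (hypothetical helper): prints "size_kb<TAB>path" for every
# hidden file or directory directly under DIR, largest first.
list_hidden() {
  local root="${1:-.}"
  # -mindepth 1 skips DIR itself; -maxdepth 1 keeps the search to the top level.
  find "$root" -mindepth 1 -maxdepth 1 -name '.*' -exec du -sk {} + | sort -rn
}
```

Running `list_hidden "$image_name"` prints nothing if there are no hidden entries.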

No, not /files, but files/.

files/ is on the same level as the Dockerfile.

BTW, builds have started hanging again, so it hadn’t fixed anything.

This is on Fedora 35, with BuildKit.

I have another box running Ubuntu 20.10 with the same Docker binary versions and BuildKit enabled too, and nothing hangs there (…so far; I don’t use it that often to build images).

I wiped the Fedora 35 box and reinstalled, and builds still hang, so there’s definitely something about Fedora.

Sorry, misread your comment, so yes, you’ve described it properly.

There are no hidden directories. It always fails at the same stage, during an apk add command (almost always on the same package, too). It doesn’t matter whether it’s a 70 MB nginx image or an 800 MB Jenkins one: it hangs, period.

I have been using your Docker course for the last 3 months. Thank you for the course. It provides crystal-clear details on what Docker is and how it can be used.

Once again, thank you so much for the course!

Ah, many thanks, glad the course is useful!