Microservices – it’s nearly here!

We’re approaching the end of an intense, exhausting, but fun few weeks recording our new release, Microservices with Spring Boot. I’ll put up a proper announcement with a preview video when it’s finally out.

In the meantime, a few words about the thinking behind the course. Although internally at VirtualPairProgrammers we use Kubernetes for much of our microservice orchestration, we wanted a course that would integrate closely with our Spring and Spring Boot courses, and that led us to Spring Cloud.

Spring Cloud, as you’ll see on the course, is a superb collection of components, mainly derived from work done at Netflix, offering features such as service discovery, load balancing, fail safety/circuit breaking and so on.

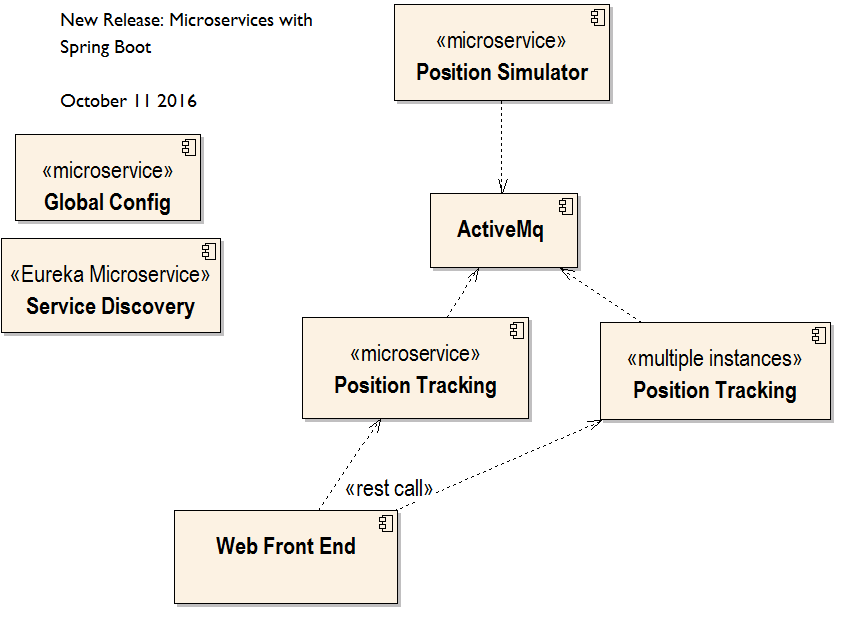

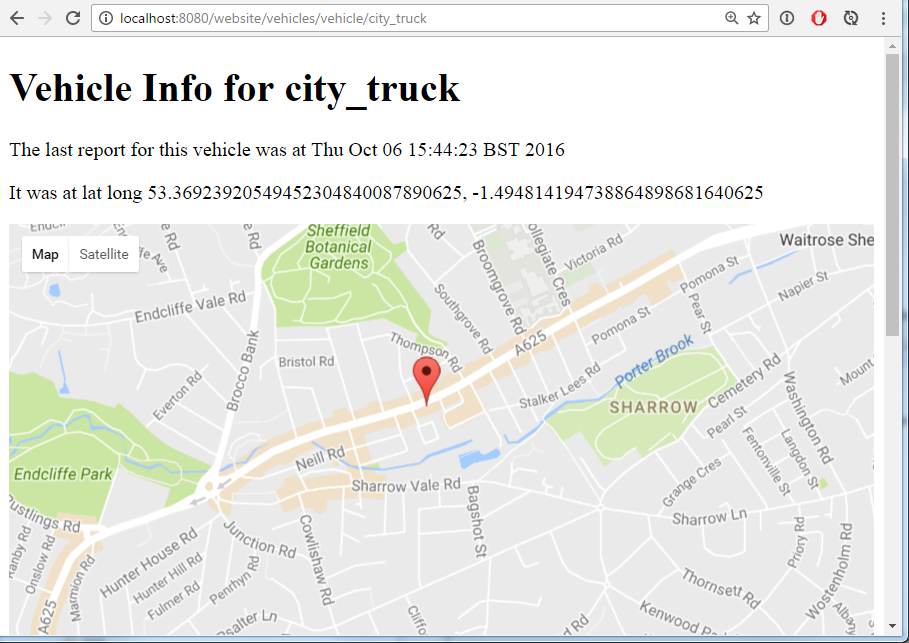

Whether or not you plan to use Spring Cloud, the course is an in-depth introduction to microservices. You’ll be managing a small set of microservices that collaborate to provide a vehicle tracking front end.

What’s in?

- An introduction to Microservices

- Messaging with ActiveMQ

- Running a Microservice Architecture

- Service Discovery with Netflix Eureka

- Client-Side Load Balancing with Ribbon

- Circuit Breakers with Hystrix

- Declarative REST with Feign

- Spring Cloud Config Server

What’s out?

This is only a “getting started” course, and I realise that it ends with many questions still unanswered, chiefly how to deploy the architecture to live hardware. As discussed in previous blog posts, automating the entire workflow is essential.

For this course, I wanted life to be as simple as possible. The downside is that it meant running a lot of microservices locally (each in a separate Eclipse workspace) and a lot of faffing around stopping and starting queues, etc. In a way, I hope this helps: it should make people realise that managing microservices is much harder than managing a monolith.

This was a hard decision to make for this course. I wanted it to be as production-grade as possible (read: not a hello world app) without being daunting and off-putting. At certain points in the course it does get hard, when we’re constantly switching from one microservice to the next, stopping and starting services and generally juggling several things at once. In a true production environment all of this would be automated and scripted, but I didn’t want that to get in the way of the learning.

So there will be a follow-on course, where we use configuration management to automate deployment to live hardware (it will be AWS, as that’s what we’re comfortable with). We’ll also use virtual machines to let developers run full production rigs on their local development machines.

Also missing from this course, though I very much wanted to include them, are Spring Cloud Bus (automatic redistribution of system-wide properties) and Zuul (an API gateway). These were dropped mainly because of time pressure, and because the sample system couldn’t really support them. We’ll be looking at these topics in the very near future.

And since this is a fast-moving area, I expect we will have to update the course soon anyway, so we’ll be releasing much more on this topic!

“Microservices Part 2: Automating Deployment” (working title) is scheduled for late November/early December. It’ll feature Ansible, Docker (probably) and lots of automated AWS provisioning, including a healthy slice of AWS Lambda. Can’t wait!

Richard, thank you for this post. I'm eagerly awaiting your course on microservices. Can't wait!

Thanks for your kind words, and I hope it's worth the wait. I think part two of the course will probably have the most value, but I guess it depends how much Dev and how much Op you are 😉

Thank you very much, Richard, for doing your best again. I've already started going through the microservices course. Part 2 is going to be even more interesting, I believe.

Thank you so much for your efforts on this course. I would like to ask whether you will provide a session on how to deploy a Spring Boot application on AWS.

Thanks! Well, I can't say yet, as it's not recorded, but I hope part 2 will at least be useful!

Definitely, Youssef, that's the plan. I'm not sure exactly what will be in, but my "mind map" at present contains Ansible (for automatic provisioning and deployment), Jenkins and Docker (this is 50/50, it might be too much), all deploying to EC2 instances. It should be an easy win to include AWS Lambda as well (the position simulator is made for the job). So it should all be fun.

BTW, if you're just looking for how to deploy a regular Boot app, it's exactly as on our existing AWS course, except that I wouldn't bother running a standalone Tomcat: just build an executable WAR file and run it from a script on an EC2 instance with Java installed.

Thanks, Richard. I am mostly interested in the CI/CD part.